Safe Robot Learning

Learning can be used to improve the performance of a robotic system in a complex environment. However, providing safety guarantees during the...

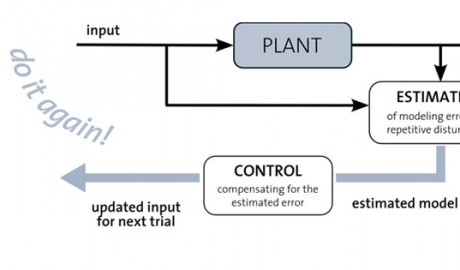

Foundations of Robot Learning, Control, and Planning

Learning algorithms hold great promise for improving a robot's performance whenever a priori models are not sufficiently accurate. We have developed...

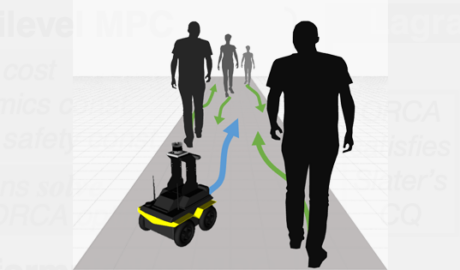

Crowd Navigation

Robots must navigate among humans to carry out a variety of service tasks, such as food delivery and autonomous wheelchair navigation....

Self-Driving Vehicles

As part of the SAE Autodrive Challenge, students in our lab will design, develop, and test a self-driving car over the next...

Vision-Based Autonomous Flight

We use vision to achieve robot localization and navigation without using external infrastructure. Our ground robot experiments localize based on 3D...

Aerial and Ground Robot Racing

This project explores the physical limits of ground and aerial robots. When operating robots in these regimes, unknown dynamic effects (for example,...